misinformation: public enemy #1 [run by Harriet Carroll, PhD]

What is research and science?

A common misconception is that science is a bunch of facts, and that scientific training means being told these facts and having to accept them. This is quite literally the opposite of science and science training. Ironically, this myth is perpetuated by those who do learn a bunch of “facts” (read: misinformation) and then blindly accept them…maybe they are projecting…

So what is science?

Science is a process of systematically understanding things whilst removing as many sources of bias as possible.

What does this mean?

It means that scientists acknowledge they are inherently biased, and that we, as humans, are pattern-seeking creatures (some examples at the end of this post). As such, we can easily be tricked into thinking that something causes something else, when actually it was just a coincidence.

Scientific methods have therefore been developed to help prevent us from being tricked. If we design a study well, no matter how easily we as humans are fooled, the data and results will be at low risk of bias and therefore more reliable and trustworthy than anything we could gauge without them. I will talk about how we do this in other posts.

It is worth noting that no study is perfect or definitive. Nature is a confusing beast, and the scientist’s job is to understand how it works. Each study adds a small bit of understanding and nuance to our current knowledge. All the evidence, gathered using various methods, is then collated to form a picture of what we think is actually going on. This is known as consilience of inductions.

How are scientists trained?

I will talk about PhDs as this is the main formal way to be trained in science, though of course there are many other routes. This is also based on UK PhD training; other countries do things slightly differently, but they still follow a similar underlying philosophy.

In the UK, most PhD routes involve zero or minimal formal teaching. The PhD student’s job is to answer a research question. This may mean replicating other people’s research to see if the result is consistent, amalgamating other people’s research to understand the overall effect of something (systematic reviews or meta-analyses), doing theoretical work to drive new ideas into the field, and/or conducting primary research to help answer the question.

As part of this, students need to read, read, and read some more. They have the daunting task of trying to read and critically evaluate everything relevant to their topic so that they can produce a worthy piece of research. After this, they often need to design and refine their study (or studies), carry out the research, analyse the data, and write it all up in a book (their thesis). A lot of PhD-ing involves realising your ideas are wrong and making mistakes. It is a learning journey, after all! This is particularly true if the work is quite novel: no one has done this research before, so previous studies may not give you a good base of knowledge on how to test your idea. You might realise halfway through that something that seemed like a good idea is actually skewing your results in a way you did not expect.

For example, one of my PhD projects looked at the effect of sugar at breakfast on health and appetite. It was a very big project, and whilst running the study I realised the effect might differ depending on whether people had a sweet tooth. I had not thought of this beforehand. After I finished the study, I reanalysed the data according to whether people had a sweet tooth, and found that this may have skewed the results.

Because PhD students often conduct novel research, their work helps drive innovation in the field. This is the direct opposite of believing a pre-set list of facts.

***PhDs (and other researchers) are not LEARNING facts, they are CREATING them!***

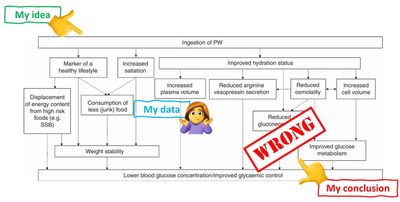

Again, to give my own example, another one of my PhD projects found the opposite of what all our previous theory, animal work, epidemiology, and other studies had suggested. What happened? I wrote a review challenging our current consensus model. It was well received! However, I do not claim my ideas are right, nor do I claim others are wrong; rather, I offer different ways to test the idea, and I await further data.

To sum:

✔ Scientists do not get trained by learning facts. Certain ideas stick because the data overwhelmingly support them, and because they are useful for predicting things

✔ Scientists do not get roped into believing or perpetuating ideas simply because they support the current consensus

✔ Scientists who are innovative drive the field forward. We like it when this happens as it means more new stuff can be learnt

Final note: the scientific method is sound; however, some of the structures that surround science, like how we need to compete for funding, are flawed. These flaws can cause problems, such as stymieing risky but innovative projects. I will talk about these problems in other posts, but I want to emphasise here that these issues are separate from the actual scientific method (and using strong scientific methods can help overcome some of these human/social issues).

If you like what I do, feel free to support the work: ko-fi.com/drsmash

Examples of pattern seeking

REGRESSION TO THE MEAN: You have a cold, so you take a vitamin C tablet. Your cold goes away 2 days later. The next time you get a cold, you remember how quickly your cold went away after taking the vitamin C tablet, so you take one again. The error here is assuming that the vitamin C helped reduce the length of your cold. If you had not taken the vitamin C, your cold would have gone anyway. How do you know the vitamin C helped? What if it actually prolonged your illness? Put simply, you do not know. The effect you saw is known as regression to the mean: we naturally drift back towards our normal state, i.e. we regress (go back) to our average (not having a cold). This is especially likely because we tend to reach for a remedy when we feel at our worst, and from our worst, the usual direction is back towards normal. Scientific methods, such as comparing against a placebo group, can be used to test for any added effect of vitamin C beyond the expected regression to the mean. As an individual, you cannot really do that.
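To see regression to the mean in action, here is a minimal Python sketch. Everything in it is a hypothetical model with made-up numbers, not real data: each day’s symptom score is a person’s usual level (`BASELINE`) plus random noise, people only take a remedy on days that reach `THRESHOLD`, and `remedy_effect` is how much the remedy truly helps. With `remedy_effect=0.0` (a tablet that does nothing), people still feel better the next day on average, simply because they took it on a bad day. Only the comparison with a placebo group, taken on equally bad days, shows that the tablet adds nothing.

```python
import random
import statistics

# Hypothetical model (illustrative numbers, not real data): a person's daily
# symptom score is their usual level plus random day-to-day noise.
BASELINE = 5.0    # average symptom score
NOISE_SD = 2.0    # day-to-day variation
THRESHOLD = 8.0   # people only reach for a remedy on days this bad or worse


def average_improvement(n_people: int, remedy_effect: float, seed: int) -> float:
    """Mean drop in symptom score from a 'bad day' (remedy taken) to the next day.

    remedy_effect is how much the remedy truly lowers tomorrow's score;
    0.0 means it does nothing at all.
    """
    rng = random.Random(seed)
    drops = []
    while len(drops) < n_people:
        today = rng.gauss(BASELINE, NOISE_SD)
        if today < THRESHOLD:
            continue  # not feeling bad enough to take anything
        # Tomorrow is just another ordinary day, minus any real remedy effect.
        tomorrow = rng.gauss(BASELINE, NOISE_SD) - remedy_effect
        drops.append(today - tomorrow)
    return statistics.mean(drops)


if __name__ == "__main__":
    # Vitamin C assumed useless here: people still improve after taking it on a bad day.
    vitamin_c = average_improvement(5000, remedy_effect=0.0, seed=1)
    # A control group that took a dummy tablet on equally bad days.
    placebo = average_improvement(5000, remedy_effect=0.0, seed=2)
    print(f"Improvement after vitamin C: {vitamin_c:.2f}")
    print(f"Improvement after placebo:   {placebo:.2f}")
    print(f"Effect of vitamin C beyond regression to the mean: {vitamin_c - placebo:.2f}")
```

Try setting `remedy_effect=1.0` for the vitamin C group: the gap between the two groups then comes out at roughly 1, which is the part a controlled study can attribute to the tablet rather than to regression to the mean.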

CORRELATION DOES NOT NECESSARILY = CAUSATION: Your friend gets a COVID-19 vaccine. One week afterwards, they get Bell’s palsy (a condition that causes facial paralysis). You have read similar stories on the internet. You therefore conclude that the vaccine caused your friend’s Bell’s palsy. Such conclusions are often misguided because they assume that an event (vaccination) caused an outcome (Bell’s palsy) simply because the outcome followed it. In this instance, Bell’s palsy occurs in thousands of people every year. Sometimes this happens after eating a sandwich, other times people just wake up with it, and other times it happens after going to the pub. Do we ever blame sandwich eating, going to sleep, or going to the pub for Bell’s palsy? No…that seems ridiculous! What epidemiologists do is estimate how many people we would expect to get Bell’s palsy anyway over a given period, and check whether rates after vaccination are higher than that. This does not prove with 100% certainty that the vaccine did NOT directly cause your friend’s Bell’s palsy, but it does mean that the overall population risk is no higher with vaccination.
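The epidemiologists’ observed-versus-expected check can be sketched in Python. All inputs are hypothetical placeholders, not real figures: an assumed background rate, number of people vaccinated, follow-up window and observed counts. Real analyses take the background rate from sources such as the NICE guidance listed in the references and use more careful statistics (for example, adjusting for age). The sketch treats the number of cases as a Poisson count, a common modelling assumption for rare events, and asks how likely it would be to see at least the observed number of cases if the vaccine had no effect at all.

```python
import math

# Hypothetical illustration: every number below is made up, not real data.
BACKGROUND_RATE_PER_100K_PER_YEAR = 30.0  # assumed background rate of Bell's palsy
VACCINATED_PEOPLE = 1_000_000             # assumed number of people vaccinated
WINDOW_DAYS = 42                          # assumed follow-up window after vaccination


def expected_cases(rate_per_100k_per_year: float, people: int, days: float) -> float:
    """Cases we would expect in this group and time window if the vaccine did nothing."""
    return rate_per_100k_per_year / 100_000 * people * days / 365.25


def poisson_upper_tail(observed: int, expected: float) -> float:
    """P(X >= observed) for X ~ Poisson(expected): how surprising the count is by chance."""
    if expected <= 0:
        raise ValueError("expected must be positive")
    # Sum P(X = k) for k < observed (computed in log space to avoid overflow),
    # then take the complement.
    cdf = sum(
        math.exp(k * math.log(expected) - expected - math.lgamma(k + 1))
        for k in range(observed)
    )
    return max(0.0, 1.0 - cdf)


if __name__ == "__main__":
    expected = expected_cases(BACKGROUND_RATE_PER_100K_PER_YEAR, VACCINATED_PEOPLE, WINDOW_DAYS)
    for observed in (36, 60):  # two hypothetical observed counts
        ratio = observed / expected
        p = poisson_upper_tail(observed, expected)
        print(f"expected {expected:.1f}, observed {observed}: "
              f"observed/expected = {ratio:.2f}, "
              f"P(at least this many by chance) = {p:.4f}")
```

A count close to the expected number (the first hypothetical case) is entirely unremarkable. Only a count well above it (the second) would prompt a closer look, and even then further studies would be needed to establish whether the vaccine was the cause.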

To highlight this point, and how language can be used to make things sound scarier than they are, it is worth noting that in the Pfizer mRNA COVID-19 vaccine study, the placebo group (which received salty water, known as saline) had 200% of the deaths of the vaccine group! WE SHOULD BAN SALINE! OK, that’s obviously ridiculous, right? Less hyperbolically… after vaccination there were 2 deaths, and after the placebo there were 4 deaths. It would seem ridiculous to say that the saline caused these deaths, right? Yet if this had been found in the vaccine group, you bet people would be shouting about the dangers of the vaccine!
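The gap between the scary relative number and the underlying reality can be made concrete with a small Python sketch. The death counts (2 after vaccination, 4 after placebo) come from the text; the group size of 20,000 per arm is a hypothetical round number, assumed equal for both groups, so the real denominators from the FDA briefing document would be needed for an actual calculation. The sketch reports the relative comparison (the headline “200%”), the absolute difference per 10,000 people, and an exact binomial test, which assumes equal group sizes and follow-up, of how likely a split at least this uneven would be if the saline made no difference at all.

```python
import math

# Deaths as reported in the post (2 vaccine, 4 placebo). The group size is a
# hypothetical round number, assumed equal for both arms, not the real trial size.
VACCINE_DEATHS, PLACEBO_DEATHS = 2, 4
GROUP_SIZE = 20_000  # hypothetical


def relative_and_absolute(events_a: int, n_a: int, events_b: int, n_b: int) -> tuple[float, float]:
    """Return (risk ratio of group A vs group B, extra events in A per 10,000 people)."""
    risk_a, risk_b = events_a / n_a, events_b / n_b
    return risk_a / risk_b, (risk_a - risk_b) * 10_000


def split_p_value(events_a: int, events_b: int) -> float:
    """Two-sided exact test of how events split between two equal-sized groups.

    If group membership makes no difference (and follow-up is equal), each event
    is equally likely to land in either group, so the split is Binomial(total, 0.5).
    """
    total = events_a + events_b
    observed_gap = abs(events_a - events_b)
    # Count the splits at least as lopsided as the one observed, in either direction.
    as_extreme = sum(
        math.comb(total, k) for k in range(total + 1)
        if abs(k - (total - k)) >= observed_gap
    )
    return as_extreme / 2 ** total


if __name__ == "__main__":
    ratio, extra_per_10k = relative_and_absolute(PLACEBO_DEATHS, GROUP_SIZE, VACCINE_DEATHS, GROUP_SIZE)
    print(f"Relative: placebo group had {ratio:.0%} of the vaccine group's deaths")
    print(f"Absolute: {extra_per_10k:.1f} extra deaths per 10,000 people")
    p = split_p_value(PLACEBO_DEATHS, VACCINE_DEATHS)
    print(f"Chance of a split at least this lopsided if saline did nothing: {p:.2f}")
```

With the placeholder group size, the headline “200%” corresponds to about 1 extra death per 10,000 people, and a 4-versus-2 split (or one more lopsided) would turn up by chance roughly two times in three: 44 of the 64 equally likely ways six deaths can fall into two groups.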

BARNUM EFFECT: You read your horoscope in the newspaper. You don’t normally do this, but you’re a bit bored with time to kill. You are shocked at how accurate the horoscope is…it describes you and your situation to a tee! Here you have been tricked by the Barnum effect (also known as the Forer effect), whereby statements that could fit almost anyone feel as though they apply specifically to you. Statements used in horoscopes and similar are often so vague and ambiguous that most people can interpret them in a way that fits themselves: you have filled in the ambiguities to fit yourself.

References

Carroll, H. A., Chen, Y-C., Templeman, I. S., Wharton, P., Reeves, S., Trim, W. V., Chowdhury, E. A., Brunstrom, J. M., Rogers, P. J., Thompson, D., James, L. J., Johnson, L., & Betts, J. A., 2020. Effects of 3-weeks iso-energetic plain versus sugar-sweetened breakfast on energy balance and metabolic health: A randomized crossover trial in healthy adults. Obesity, doi: 10.1002/oby.22757.

Carroll, H. A., & Hill, C. G., 2020. Effect of plain versus sugar-sweetened breakfast on energy balance and metabolic health: A secondary data analysis stratified by participant-defined sweet preference. NutriXiv, doi: 10.31232/osf.io/68h3x.

Carroll, H. A., & James, L. J., 2019. Hydration, arginine vasopressin, and glucoregulatory health in humans: A critical perspective. Nutrients, 11(6), 1201, doi: 10.3390/nu11061201.

Carroll, H. A., Templeman, I. S., Chen, Y-C., Edinburgh, R. M., Burch, E. K., Jewitt, J. T., Povey, G., Robinson, T. D., Dooley, W. L., Jones, R., Tsintzas, K., Gallo, W., Melander, O., Thompson, D., James, L. J., Johnson, L., & Betts, J. A., 2019. The effect of hydration status on glycemia in healthy adults: A randomized crossover trial. Journal of Applied Physiology, 126(2), 422-430, doi: 10.1152/japplphysiol.00771.2018.

Food and Drug Administration, 2020. Pfizer-BioNTech COVID-19 vaccine briefing document. https://www.fda.gov/media/144245/download

Morales, D. R., Donnan, P. T., Daly, F., Van Staa, T., & Sullivan, F. M., 2013. Impact of clinical trial findings on Bell's palsy management in general practice in the UK 2001-2012: Interrupted time series regression analysis. BMJ Open, 3, e003121, doi: 10.1136/bmjopen-2013-003121.

National Institute for Health and Care Excellence (NICE), 2019. Bell's palsy. https://cks.nice.org.uk/topics/bells-palsy/

Picture source:

Carroll, H. A., Betts, J. A., & Johnson, L., 2016. An investigation into the relationship between plain water intake and HbA1c: A sex-stratified cross-sectional analysis of the UK National Diet and Nutrition Survey (2008-2012). British Journal of Nutrition, 116(10), 1770-1780. doi: 10.1017/S0007114516003688.

don't believe the hype

Copyright © 2024 don't believe the hype - All Rights Reserved.
